Science + Society

Particle

Physics

Everything that we can see and touch is made up of particles, the basic building blocks of nature. Particles like protons, neutrons, and electrons compose the atoms of the computer or phone you are touching right now. Photons are responsible for the glow emitted from the screen. These same photons, when produced by the Sun, are responsible for the light that shines in the sky.

Particle physics is the branch of science that investigates the nature and the behavior of these particles. It is one of the fundamental research fields: research that seeks to explore and explain nature at its essence. Understanding how particles interact can illuminate the innermost mechanisms of our universe. We can study how the big bang gave birth to matter, how stars behave, how cosmic rays travel from distant stars to our Earth, and how the universe itself evolves.

Fundamental research fields explore the structure and behavior of the universe for the advancement of human knowledge, but that doesn’t mean they don’t have practical applications that affect people’s lives. Disciplines that conduct fundamental research form the base of a knowledge pyramid: their discoveries provide a foundation for practical applications developed by other disciplines. Research spinoffs and collaborations between researchers and industry bring further development with new, sometimes world-changing, applications.

Part 01

The Science of Particle Physics

Since ancient times, people have wondered what the objects around them were composed of. Around the 4th century B.C., the Greek philosopher Democritus coined the term “atomos” to indicate the smallest indivisible part of something. His concept was abandoned for hundreds of years, however, and it wasn’t revived until the early 19th century, when the English chemist and physicist John Dalton proposed an atomic theory of matter. After he confirmed his theory through experiments on gases, other physicists started investigating the structure of matter at a smaller scale, discovering complex substructure. Today we know that the modern atom is not the smallest component of matter, nor is it indivisible.

Different materials are made up of different atoms. How those atoms organize themselves determines a material’s properties. That, in other words, is chemistry. If we looked closely at one of those atoms, we would see many electrons occupying the space around a tiny but heavy nucleus; how these atoms interact and bind together is the subject of a field known as condensed matter physics. The nucleus itself is composed of smaller components called protons and neutrons; the study of those and the forces acting between them is what nuclear physics addresses.

Going deeper, we can see that even smaller elements compose protons and neutrons. These are called quarks. So far we cannot divide quarks or electrons into smaller elements. We currently assume that those are the basic building blocks of nature—the fundamental elements of all matter. The study of these extremely tiny objects and of the forces which bind them is subnuclear physics, also known as particle physics.

The Fundamental Forces

One of the great achievements of physics is the identification of four fundamental forces that form the basis of all other forces and interactions we see in our universe. The basic forces are gravity, which prevents us from drifting up into the sky and binds planets and galaxies together; the electromagnetic force, which governs the flow of electricity through a cable, the magnetism pushing a compass’ needle, and the light illuminating night; and the weak and strong nuclear forces, which act at the atomic and nuclear level. The weak nuclear force is responsible for radioactivity and roughly one-half of the warmth produced in Earth’s core. The strong nuclear force binds atoms’ nuclei together, giving matter a stable form—without it, we wouldn’t be here right now!

We know today that the last three forces listed are carried by particles: the intangible and massless photon is the carrier of the electromagnetic force; two particles, known by physicists as “W” and “Z,” carry the weak nuclear force; and a particle called the gluon is the bearer of the strong nuclear force. Studying these basic elements and forces leads to an understanding of the innermost mechanisms governing the universe.

Natural sources of particles

At the end of the 19th century, J.J. Thomson discovered the first fundamental particle: the electron. The discovery of the electron marked the beginning of particle physics. At the beginning of the 20th century, scientists observed that particles were not only part of the structure of matter, but were also produced by natural phenomena. Naturally produced particles were the focus of early experiments in particle physics, and many particles were discovered this way, for example the positron and the muon.

Radioactive elements have unstable structures which cause them to spontaneously emit particles as byproducts of their internal readjustment. One of the best known is uranium, a common fuel for nuclear power plants. Clock makers used radium and tritium to make the phosphor on glow-in-the-dark clock hands glow. Different radioactive atoms emit different kinds of particles, including electrons, photons, and alpha particles (two protons and two neutrons bound together). Radioactive elements are naturally present in small quantities in the soil, and were the object of study by particle physics pioneers such as Henri Becquerel and Marie Curie.

Particles from the cosmos continuously hit our planet and strike the atoms in the Earth’s upper atmosphere, producing additional particles. Those particles are called cosmic rays. Among them, we find photons and electrons, but also muons (a sort of heavy electron) and positrons, the anti-matter equivalent of the electron. The particles produced in the collisions travel towards the planet’s surface. Many of them get trapped in subsequent collisions, some reach the ground, and others continue traveling undisturbed. Cosmic rays are harmless to us: humanity has evolved in this environment and the human body is accustomed to those particle showers.

Observing and studying particles

Particles are the smallest known objects in the universe, and are intangible like light. So how is it that scientists can observe, study, and manipulate them? Particles can’t really be seen, because seeing something involves sending a beam of visible light towards an object and observing light that bounces back. But particles are so small that no visible light bounces back, even when using the most powerful microscopes. We have to find other ways to “see” particles.

Different particles behave differently when passing through matter. Electrons and photons rapidly lose their energy by continuously colliding with the atoms of the material until they get trapped. Protons travel through matter almost unnoticed until they are close to the end of their journey, when they lose all their energy in a burst and immediately stop.

Muons, on the other hand, can travel through layers and layers of any material, even through hundreds of meters of solid rock, without losing much of their energy. Scientists build labs, like the Gran Sasso laboratory in Italy, deep under mountains to shield sensitive experiments from cosmic muons. Neutrinos, another fundamental particle, interact extremely weakly with all other objects: they can pass through entire planets without stopping.

Source: Wikipedia.

Many particles have an electric charge. A charged particle behaves like the needle of a compass near a magnet: it points towards one of the magnet’s poles. Charged particles, like electrons or protons, are influenced by electromagnetic fields. An electric field can be used to push charged particles forward, while a magnetic field can make a particle move to one side or another, depending on the charge of the particle.

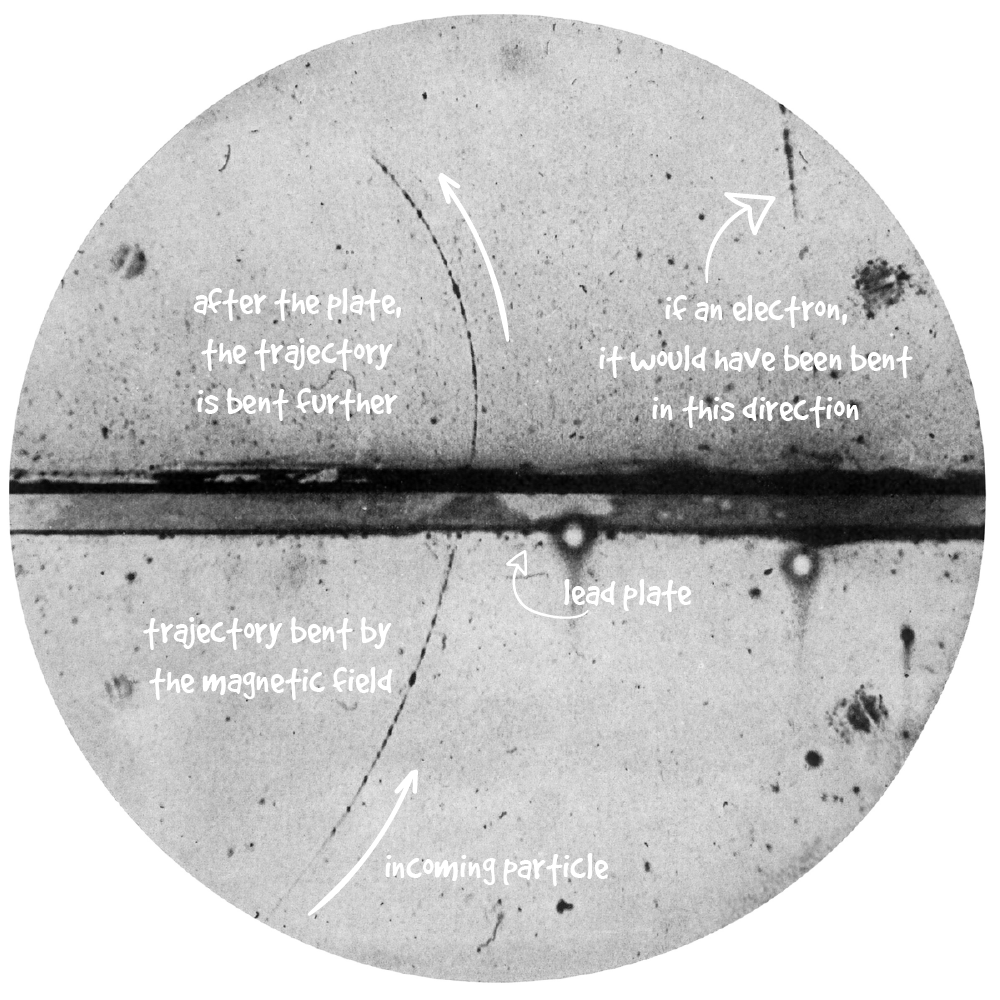

In 1933, Carl Anderson discovered the positron, an electron with positive charge. The discovery proved the existence of anti-matter.

Source: Wikipedia.

References: [1] and [2].

Arrow symbols by Freepik.

Anderson’s original journal paper, The Positive Electron, in which he announces the discovery of the positron, is an interesting read! You can freely read it online.

Also, if you like, you can take photos of cosmic rays too!

In 1933, while photographing cosmic rays with a Wilson chamber, Carl Anderson took a photograph of a particle passing through a thin lead plate placed inside the chamber. The particle behaved almost exactly like an electron, except for the way the magnetic field around the chamber bent its trajectory. If it were an electron, the magnet would have bent the path in the opposite direction. Anderson had proof that this particle wasn’t an electron. What he observed was a positron, the anti-electron, and the first proof of the existence of anti-matter.

Accelerating particles

Electromagnetic fields influence the speed and trajectories of charged particles. An electromagnetic field can always be divided into two distinct components: an electric field and a magnetic field. The force exerted by the field on a charged particle is called the Lorentz force:

$$\vec{F} = q\left(\vec{E} + \vec{v} \times \vec{B}\right)$$

In the above formula, $q$ is the charge of the particle and $\vec{v}$ its velocity, $\vec{E}$ is the electric field, and $\vec{B}$ is the magnetic field. For a given electric and magnetic field, the particle experiences a force from the field dependent on its charge and velocity. But what if we could control the fields $\vec{E}$ and $\vec{B}$?
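As a small numerical illustration of the Lorentz force, F = q(E + v × B), the sketch below evaluates the force on a positron and on an electron moving through the same magnetic field. The field strength, the velocity, and the function names are illustrative assumptions, not values from the article.

```python
ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs


def cross(a, b):
    """Cross product of two 3-component vectors given as tuples."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def lorentz_force(charge, velocity, electric_field, magnetic_field):
    """Return F = q (E + v x B) as a tuple of newtons."""
    v_cross_b = cross(velocity, magnetic_field)
    return tuple(charge * (e + vxb) for e, vxb in zip(electric_field, v_cross_b))


# Assumed setup: particle moving along x, magnetic field along z, no electric field.
velocity = (1.0e6, 0.0, 0.0)        # m/s
electric_field = (0.0, 0.0, 0.0)    # V/m
magnetic_field = (0.0, 0.0, 0.01)   # tesla

force_on_positron = lorentz_force(+ELEMENTARY_CHARGE, velocity, electric_field, magnetic_field)
force_on_electron = lorentz_force(-ELEMENTARY_CHARGE, velocity, electric_field, magnetic_field)

print("positron:", force_on_positron)
print("electron:", force_on_electron)
```

The two forces point in opposite directions along y, which is why a magnetic field bends positrons and electrons in opposite ways.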

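Rewriting the formula to isolate the velocity of the particle, we find this:

$$v = \sqrt{\frac{2 q V}{m}}$$

The expression describes the velocity $v$ of a particle of charge $q$ and mass $m$ that starts at rest, given the potential difference $V$ of an electric field (it holds as long as the speed stays well below the speed of light). This means that creating a large voltage difference will increase a charged particle’s velocity.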

The particle in the diagram has been drastically slowed down. In reality, the particle would instantly fly out of the page, since an electron put in a small accelerator (say, of 10 meters) and pushed by an electric field (say, of 100 volts) will be accelerated to a speed of about 6 million meters per second, which is almost 22 million km/h! That speed, while fast on its own, is still much slower than the speed of light (the fastest possible speed in nature, as far as we currently know), which is about 1 billion km/h!

Also notice how much more energy is needed to push a proton, which carries the same amount of electric charge as an electron but has a much larger mass. At the same voltage, the proton ends up far slower than the electron.
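The figures quoted above can be checked with a few lines of arithmetic. This is a minimal sketch assuming the non-relativistic relation v = sqrt(2qV/m) and a particle starting at rest; the constant and function names are chosen here for illustration.

```python
import math

ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs
ELECTRON_MASS = 9.1093837015e-31     # kilograms
PROTON_MASS = 1.67262192369e-27      # kilograms
SPEED_OF_LIGHT = 299_792_458.0       # meters per second


def speed_after_voltage(charge, mass, voltage):
    """Non-relativistic speed gained from rest across a potential difference."""
    return math.sqrt(2.0 * abs(charge) * voltage / mass)


electron_speed = speed_after_voltage(ELEMENTARY_CHARGE, ELECTRON_MASS, 100.0)
proton_speed = speed_after_voltage(ELEMENTARY_CHARGE, PROTON_MASS, 100.0)

print(f"electron: {electron_speed:.3e} m/s = {electron_speed * 3.6:.3e} km/h")
print(f"proton:   {proton_speed:.3e} m/s")
print(f"electron speed as a fraction of c: {electron_speed / SPEED_OF_LIGHT:.3f}")
```

At 100 volts the electron reaches roughly 2% of the speed of light, so the non-relativistic formula is a reasonable approximation here; at much higher voltages it would overestimate the speed.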

If we instead rewrite the formula to isolate the radius of curvature of the particle’s trajectory, $r$, given the speed $v$ of the particle and the strength $B$ of the magnetic field, we find:

$$r = \frac{m v}{q B}$$

This means that a magnetic field can be used to steer the path of a charged particle.
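To get a feeling for the sizes involved, the bending radius r = mv/(qB) can be evaluated for an electron. The sketch below assumes a non-relativistic particle moving perpendicular to a uniform magnetic field; the chosen speed and field strengths are illustrative assumptions.

```python
ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs
ELECTRON_MASS = 9.1093837015e-31     # kilograms


def bending_radius(mass, speed, charge, magnetic_field):
    """Radius of the circular path of a charge moving perpendicular to B."""
    return mass * speed / (abs(charge) * magnetic_field)


# Assumed example: an electron at about 6 million m/s in two different fields.
radius_weak_field = bending_radius(ELECTRON_MASS, 5.93e6, ELEMENTARY_CHARGE, 1.0e-3)
radius_strong_field = bending_radius(ELECTRON_MASS, 5.93e6, ELEMENTARY_CHARGE, 2.0e-3)

print(f"B = 1 mT: r = {radius_weak_field * 100:.2f} cm")
print(f"B = 2 mT: r = {radius_strong_field * 100:.2f} cm")
```

Doubling the field halves the radius, which is the knob a bending magnet uses to steer a beam.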

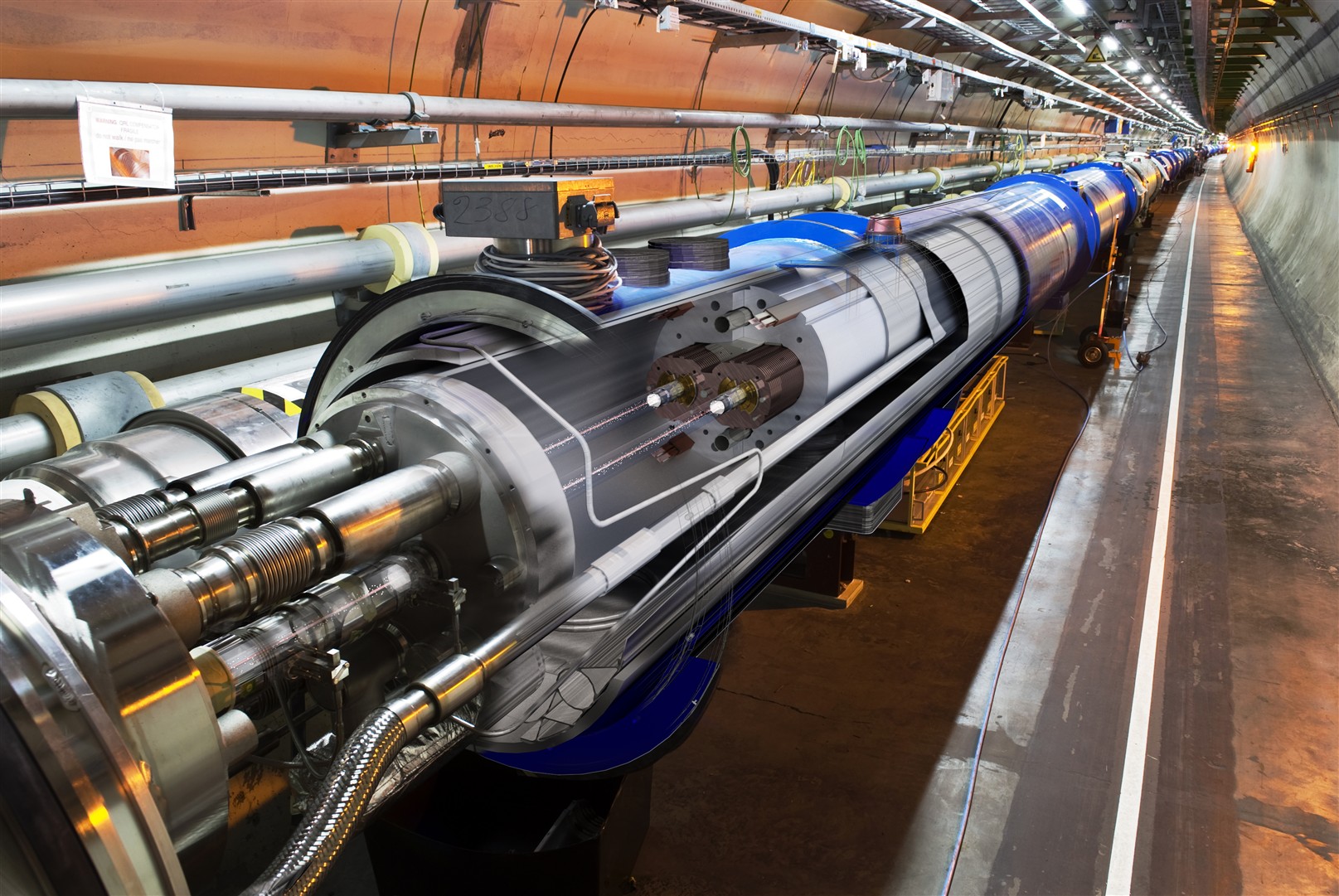

Using these two principles, physicists started building particle accelerators. By placing accelerating modules one after another, we can build linear accelerators, where the energy that the accelerator can produce is governed by its length. By combining accelerating and bending modules, we can build circular accelerators, in which particles are injected and forced to move in a circular path. Each time a particle goes around, it is accelerated. After a given number of cycles, the particles attain their maximum energy and can be used for experiments.

Circular accelerators can be further divided into two main groups: cyclotrons, which were the first to be invented around 1930, and synchrotrons. The former can be seen in hospitals today. The latter are used in machines for cutting-edge research in particle physics, such as the Large Hadron Collider (LHC) at CERN.

Source: CERN.

Physicists designed accelerators to study the basic characteristics of particles, but it quickly became clear that they could have many other scientific and industrial applications. For example, old television sets were driven by cathode-ray tubes, which are nothing more than compact particle accelerators!

Electrons are produced and subjected to an electric field, causing them to fly towards a certain end of the tube. This end is covered by a layer of phosphorescent material that becomes luminescent when hit by electrons. The trajectory of the electrons is bent by a variable magnetic field, which forces electrons to hit the luminescent screen at different points. By modulating the intensity of the magnetic field, the electron beam paints an image on the screen.

Particle factories

So far, we have talked about particles produced in natural sources, but there are many more natural phenomena involving particles. All fundamental phenomena involving the electromagnetic force, for example, depend on the characteristics of electrons and photons, the elementary particles responsible for light. The stability of atoms, which compose all matter, depends on the interaction between two fundamental particles: quarks and gluons. These particles were discovered at the SLAC laboratory (US) at the end of the 1960s and at the DESY Laboratory (Germany) in 1979, respectively. The mechanism behind radioactive decay, which occurs in the cores of stars and planets like Earth, is determined by the behavior of the W and Z particles, discovered at CERN in 1983.

Many fundamental mechanisms in the universe depend on particles which we haven’t discovered yet. This creates a number of interesting questions for modern physicists. For example, how did the universe form? At the beginning of time, in a fraction of a second, quarks and gluons bounced and smashed, interacting and recombining until they created the first glimpse of the matter we see today. And which particle, if any, is behind Dark Matter, which composes about 25% of the total energy of our universe? Is gravity carried by a particle like the other fundamental forces? And how are the high-energy cosmic rays that hit our planet generated, if there are no known sources for them near Earth?

To answer these questions, and others not yet asked, we need particles interacting at much higher energies and with a much higher flux than those we can obtain from natural sources like radioactive elements or cosmic rays. To make progress, we would need bespoke particle factories, to create large fluxes of particles at the desired energy to study interactions and effects. That’s where Einstein comes in.

In 1905, Albert Einstein proposed a theory about the equivalence of mass and energy. Contained in the well-known formula $E = mc^2$, his theory roughly states that an object has a certain amount of energy based on its mass, even when at rest. Conversely, if we create the exact amount of energy that corresponds to the mass of a specific particle, we can then create that particle and study it. Modern particle colliders are built for this: they accelerate particles up to the highest possible velocities and make them collide. The energy involved in the impact is used to create new particles which are caught by detectors and studied.
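To make the mass-energy equivalence concrete, here is a minimal sketch that converts a particle’s mass into its rest energy using E = mc², expressed in the mega-electronvolt units that particle physicists commonly use. The function name and the choice of example particles are assumptions made for illustration.

```python
SPEED_OF_LIGHT = 299_792_458.0        # meters per second
JOULES_PER_MEV = 1.602176634e-13      # one mega-electronvolt in joules
ELECTRON_MASS = 9.1093837015e-31      # kilograms
PROTON_MASS = 1.67262192369e-27       # kilograms


def rest_energy_mev(mass_kg):
    """Rest energy E = m c^2, converted from joules to MeV."""
    return mass_kg * SPEED_OF_LIGHT**2 / JOULES_PER_MEV


electron_energy = rest_energy_mev(ELECTRON_MASS)
proton_energy = rest_energy_mev(PROTON_MASS)

# Creating an electron together with its anti-particle (a positron, which has
# the same mass) requires at least twice the electron's rest energy.
pair_threshold = 2.0 * electron_energy

print(f"electron rest energy: {electron_energy:.3f} MeV")
print(f"proton rest energy:   {proton_energy:.1f} MeV")
print(f"electron-positron pair threshold: {pair_threshold:.3f} MeV")
```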

The particles created in colliders are not to be considered artificial: they are naturally found under specific conditions in other places, like stars, or confined in microscopic objects, like the nuclei of atoms. Using particle colliders, we are simply creating the right conditions for them to be observed in a laboratory. Although today’s particle accelerators are impressive, they don’t compare to natural particle accelerators found in outer space. Cosmic rays from outside the Milky Way create much more energetic collisions in the Earth’s upper atmosphere than our most powerful colliders.

Conducting experiments on particles

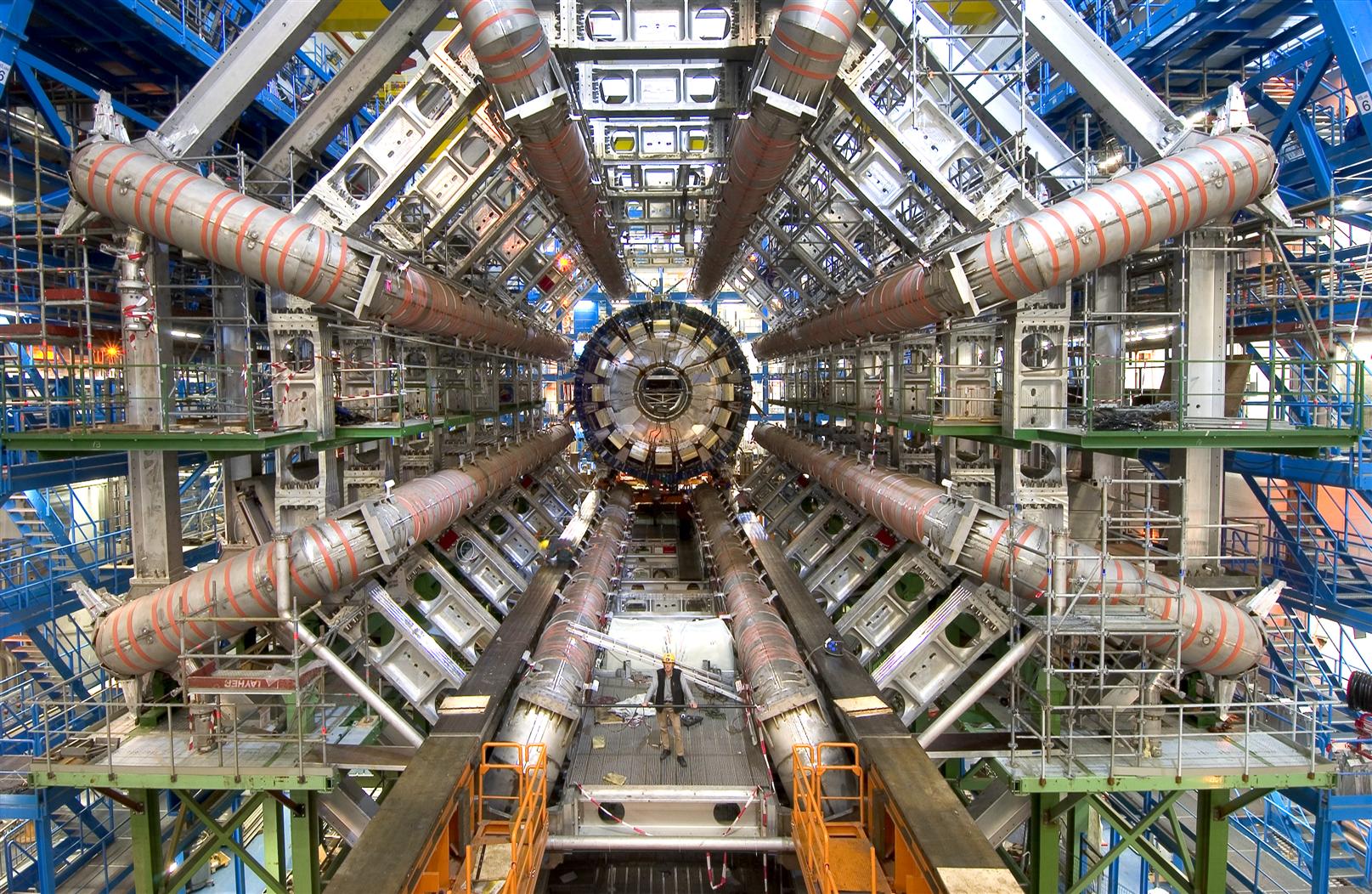

In a circular collider, two beams of accelerated particles smash into one another at a collision point. From the energy of that collision, new particles arise, which physicists can catch and measure. By identifying and measuring these particles, physicists can infer how they were generated. For that, particle physics experiments use many layers of different types of detectors placed around the collision point, since different particles require different detectors (different particles act differently when traveling through matter!).

In the simplified visualization above, three layers of detectors are placed one after the other. A magnetic field permeates the space around them. When a charged particle comes, the magnetic field bends its trajectory, as seen above, and makes it traverse the three detectors at different positions. The detectors record the passage of the particle and send the information to the computers which handle the experimental data. From those data, scientists can reconstruct the trajectory of the particle in the experiment and, by knowing the strength of the magnetic field, the energy of the particle.
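The reconstruction described above can be sketched in a toy form: three hit positions define a circle, and the circle’s radius together with the field strength gives the particle’s momentum. This is a simplified illustration, assuming a uniform field perpendicular to the plane of the hits, a particle with one unit of charge, and perfect position measurements; the function names are hypothetical and real experiments use far more elaborate track fits.

```python
import math


def circle_radius(p1, p2, p3):
    """Radius of the circle through three 2D points (circumradius)."""
    a = math.dist(p2, p3)
    b = math.dist(p1, p3)
    c = math.dist(p1, p2)
    # Twice the triangle area, from the 2D cross product of two edges.
    twice_area = abs(
        (p2[0] - p1[0]) * (p3[1] - p1[1]) - (p3[0] - p1[0]) * (p2[1] - p1[1])
    )
    if twice_area == 0.0:
        raise ValueError("hits are collinear: the track is not measurably bent")
    return a * b * c / (2.0 * twice_area)


def momentum_gev(radius_m, field_tesla):
    """Momentum (GeV/c) of a unit-charge particle: p = 0.2998 * B * r."""
    return 0.299792458 * field_tesla * radius_m


# Simulated hits on a circle of radius 2 m, as three detector layers might record them.
TRUE_RADIUS = 2.0
hits = [
    (TRUE_RADIUS * math.sin(t), TRUE_RADIUS * (1.0 - math.cos(t)))
    for t in (0.1, 0.2, 0.3)
]

radius = circle_radius(*hits)
momentum = momentum_gev(radius, field_tesla=2.0)
print(f"reconstructed radius: {radius:.3f} m, momentum: {momentum:.3f} GeV/c")
```

Strictly speaking, the curvature yields the momentum component perpendicular to the field; the energy then follows once the particle type, and therefore its mass, is identified.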

Modern particle physics experiments can be very large due to the amount of energy involved in smashing particles together. When a collision occurs, many new particles are created which move outward from the collision at extremely fast speeds in all directions. To catch and measure these particles properly, scientists use layers of detectors and magnets placed around the collision point, so that they can reconstruct the particle trajectories after the collision for analysis.

Source: ATLAS Experiment, CERN.

In reality, many collisions occur and generate particles. The LHC, for example, smashes particles every 25 nanoseconds, producing about 40 million collisions every second! In each collision, thousands of particles are produced stemming from many different underlying phenomena. The work of experimental particle physicists concerns the design and development of bespoke hardware and software systems to effectively acquire and filter data from these collisions. With this data, they can identify and measure characteristics of particles and ultimately learn about the underlying mechanisms which generate them.
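The rate quoted above follows directly from the 25-nanosecond spacing. A short sketch of the arithmetic, with illustrative names:

```python
BUNCH_SPACING_SECONDS = 25e-9  # time between successive collisions, from the text


def collisions_in(duration_seconds):
    """Number of collision events in a given time at one event per 25 ns."""
    return duration_seconds / BUNCH_SPACING_SECONDS


collisions_per_second = collisions_in(1.0)
collisions_per_hour = collisions_in(3600.0)

print(f"{collisions_per_second:.3g} per second")
print(f"{collisions_per_hour:.3g} per hour")
```

Numbers of this size are the reason experiments need dedicated systems to filter the data in real time rather than storing every event.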

Part 02

Particle Physics in Society

Particle accelerators such as those at CERN and Fermilab are designed and built for cutting-edge scientific research, but there are many others which are used for very different, practical applications. Without noticing, you may have already interacted with particle accelerators and particle detectors. For example, if you have ever had a dental X-ray, or a PET scan, then you have experienced a compact version of a particle accelerator and detectors.

There are about 30,000 particle accelerators in the world today, almost half of which are used in medicine in applications such as radiotherapy, X-ray imaging, and particle therapy. Some are used for other purposes, such as producing semiconductors. In fact, only 1% of all particle accelerators running today are used for fundamental research.

Many technologies we commonly use today are the results of research spin-off from particle physics. These byproducts were developed to build and run experiments or to support the activities of the particle physics community. Two impactful examples include medical scanners used in hospitals and the World Wide Web. Both are indirect products of fundamental research conducted by particle physicists while conducting their primary research.

Medicine

Particle physics and medicine have a long history, dating back to some of the first particle physics experiments ever conducted: observing particles that interact with our bodies. Thousands of low-energy particles hit our skin every second without us noticing. Our skin acts as a barrier and is strong enough to stop these particles, ultimately protecting our internal organs. But more energetic particles can pierce through our bodies. This was observed by early experimenters who saw the effects of energetic particles traveling through their hands and bodies while setting up experimental apparatuses. This discovery spawned hundreds of new experiments within medicine, whose results are still used today for many diagnostic techniques, such as radiographies and CT scans.

What these early pioneers did not know, however, is that energetic particles release their energy along their path through our bodies, and produce different biological effects in the tissues and organs they traverse. If not properly controlled, energetic particles traveling through an organ can be very dangerous. But, if controlled and well-calibrated, these techniques can visualize sick organs or heal malignant tumors where traditional surgery cannot reach.

Today, medical physics is an independent branch of research, which studies, quoting CERN physicist Marco Silari, “the application of physics techniques to the human health.”

X-ray images

Many people get a radiography—that black and white image of bones or other organs—at least once in their lives, perhaps to confirm a broken bone or to inspect dental health. Radiography imaging, better known as X-rays, is a direct application of what was discovered from early experiments in particle physics.

X-rays (where the “X” stands for “unknown”, originally named for its mysterious origins) were found in 1895 by German physicist Wilhelm Röntgen. He discovered them while experimenting with a Crookes tube: the forerunner of the cathode-ray tube used in TV sets. Röntgen noticed a luminescent halo in the fluorescent screen far away from the tube, even when thick books and a thin metal plate were placed between them.

Röntgen realized that those rays (which were later discovered to be photons of a certain energy) were absorbed differently by different materials. By placing a photographic plate behind the object under test, he could observe how the level of darkness in the resulting image depended on the amount of energy that the particles left in the object. Shortly after, Röntgen had a “Eureka!” moment when he realized the new “X”-rays could also pass through the human body, producing an image of what’s inside. He then took the first X-ray image, using his wife’s hand and her wedding ring as the subject.

Source: Wikipedia.

PET scanners

Besides X-rays, there are many other medical imaging techniques that apply physics techniques. Positron-emission tomography (PET), for example, is a nuclear medicine imaging technique, which comes directly from particle and nuclear physics research.

PET scanners use a radioactive isotope, called a “tracer”, which binds to specific tissues in the patient’s body. The isotope then rapidly decays, emitting positrons (positive electrons, as described above). The positron travels a very short distance in the body before releasing its energy, and is eventually caught by an atom, where it meets an electron. When a positron, the anti-matter buddy of the electron, meets an electron, the two particles annihilate. That means that they “dissolve” during the interaction and a certain amount of energy is released, following Einstein’s mass-energy equivalence. The annihilation energy produces two photons of a given energy, which escape the patient’s body in opposite directions.

To catch the escaping photons, layers of particle detectors are placed around the patient, in the very same way particle detectors are placed around the collision point when studying the interaction between fundamental particles. The detectors around the patient (the big ring patients see when they lie down for the PET exam) detect the two escaping photons, measuring the time of arrival and the direction. A piece of dedicated software reconstructs the trajectory and the point of origin within the patient’s body.
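One ingredient of that reconstruction can be sketched in a few lines: if the two photons travel in opposite directions at the speed of light, the difference between their arrival times tells us how far from the midpoint between the two detectors the annihilation took place. This is a simplified sketch assuming ideal timing and a single pair of detectors; the function name is hypothetical, and real scanners combine a very large number of photon pairs with statistical image reconstruction.

```python
SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def offset_from_midpoint(arrival_time_a, arrival_time_b):
    """Distance (m) of the annihilation point from the midpoint between
    detectors A and B, positive when the point lies closer to detector A."""
    return SPEED_OF_LIGHT * (arrival_time_b - arrival_time_a) / 2.0


# Assumed geometry: detectors 0.8 m apart, annihilation 0.1 m from the
# midpoint towards detector A, so the photons travel 0.3 m and 0.5 m.
time_a = 0.3 / SPEED_OF_LIGHT
time_b = 0.5 / SPEED_OF_LIGHT

offset = offset_from_midpoint(time_a, time_b)
print(f"time difference: {(time_b - time_a) * 1e12:.0f} ps")
print(f"annihilation point: {offset:.3f} m from the midpoint, towards A")
```

The time difference in this example is well under a nanosecond, which gives an idea of the timing precision such detectors need.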

The tracer collects in organs and tissues of higher chemical activity, which is often linked to certain diseases. The areas where the tracer accumulates are the origin of a higher number of photons and show up as bright spots on the images from the PET scan. Cancer cells, for instance, have a higher metabolic rate than normal cells and, because of this, they appear as bright spots on a PET scan, helping doctors check the presence or the status of a tumor.

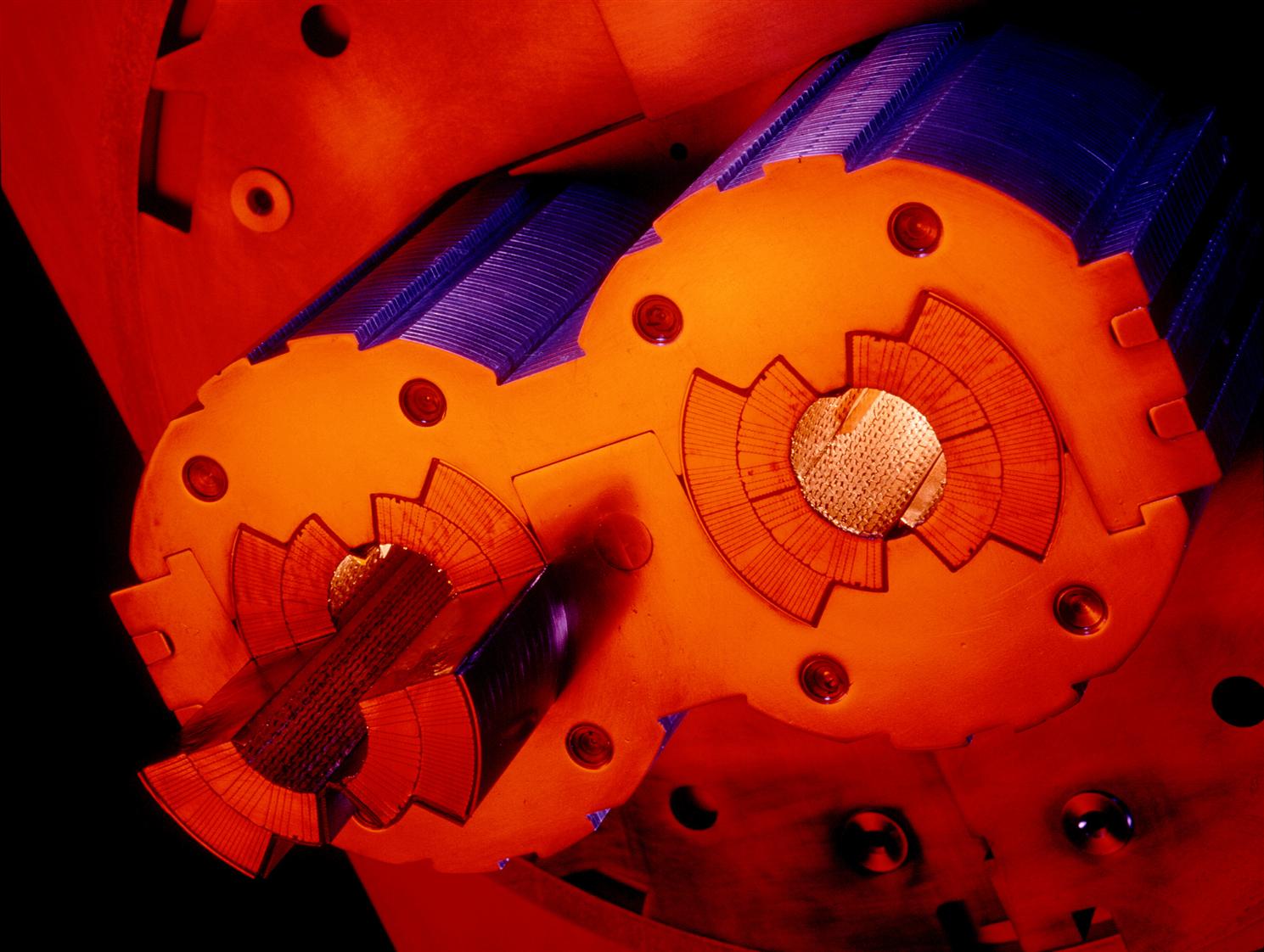

The radioactive isotopes used in PET scanners have to be produced in cyclotrons, small circular particle accelerators which combine an oscillating electric field and a static magnetic field that holds the particles to accelerate in a spiral path. As CERN scientist Yacine Kadi explains in one of his lectures, the accelerated particles are then driven to a specific target which, after irradiation, contains the desired radioisotope. The parameters like particle energy, beam intensity, target material, and time will determine the kind and the quantity of produced radioisotopes.

Decay after decay, samples of radioactive materials slowly “die”, emitting fewer and fewer particles. How slowly they die depends on the material itself. The half-life is a parameter that indicates the time needed for half of the atoms of the radioactive material to decay, on average; in practice, it indicates how long the radioactive material lasts.

Tracers with a shorter half-life must be used shortly after their production; therefore, they should be produced close to the PET facility or transported there as fast as possible. PET radioisotopes can be transported over a distance of at most 3 to 5 hours, which confines their production to the hospital’s neighborhood.

Since the half-life of the produced radioactive tracers is quite short (short-lived tracers are chosen specifically to keep the radioactive dose absorbed by the patient as low as possible), they have to be produced close to the PET scanner. One of the most used PET tracers is based on the radioisotope fluorine-18, whose half-life is 110 minutes, which allows for medium-long transports. Another one is rubidium-82, with a half-life of 1.27 minutes, which is usually produced very close to the PET facility.
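The effect of the half-life on transport can be estimated with the exponential decay law: after a time t, the fraction of the original atoms still present is 0.5 raised to the power t divided by the half-life. The sketch below uses the two half-lives quoted above; the transport times are illustrative assumptions.

```python
FLUORINE_18_HALF_LIFE_MIN = 110.0  # minutes, from the text
RUBIDIUM_82_HALF_LIFE_MIN = 1.27   # minutes, from the text


def fraction_remaining(elapsed_minutes, half_life_minutes):
    """Fraction of the original radioactive atoms left after a given time."""
    return 0.5 ** (elapsed_minutes / half_life_minutes)


# Fluorine-18 after a three-hour transport.
fluorine_after_3h = fraction_remaining(180.0, FLUORINE_18_HALF_LIFE_MIN)

# Rubidium-82 after only ten minutes.
rubidium_after_10min = fraction_remaining(10.0, RUBIDIUM_82_HALF_LIFE_MIN)

print(f"fluorine-18 left after 3 hours:  {fluorine_after_3h:.1%}")
print(f"rubidium-82 left after 10 min:   {rubidium_after_10min:.2%}")
```

About a third of the fluorine-18 survives a three-hour trip, while rubidium-82 is almost gone after ten minutes, which is why the latter has to be produced next to the scanner.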

Given that the cost of operating a cyclotron, along with the equipment for the subsequent preparation of the radioactive tracer, is quite high, cyclotrons are usually found only in large hospitals or in universities and research institutes which operate those facilities for several smaller hospitals simultaneously.

New frontiers of cancer therapy: hadron therapy

So far we have looked at how particles are used as probes in medical imaging, but particles are used as therapies too. Beams of ionizing radiation (another name for beams of energetic particles) damage the DNA of cancerous tissue, leading to the death of its cells.

In 1906, shortly after the discovery of radioactivity itself by Henri Becquerel, Pierre Curie suggested that tumors could be cured by inserting a radioactive source (usually emitting electrons or photons), which causes them to shrink. The technique was called brachytherapy, from the Greek word brachys, which means “short distance”, to indicate that the radioactive source is placed in contact with the tumor. Common in the beginning of the 20th century, brachytherapy is still used today to treat different kinds of tumors.

Another common cancer therapy which makes use of particles is radiotherapy, where X-rays are collimated and driven to the target organ or tissue. To deliver the planned dose, multiple treatments from different angles are usually given. Electron therapy uses beams of energetic electrons. Given their characteristics, beams of electrons do not penetrate deeply in the body tissues, so they are mainly used to treat skin tumors.

The drawback of all these techniques resides in the nature of the particles used for them. Because of the way energetic electrons or photons interact with matter (all matter, not just bodily tissue), much energy is released in the healthy tissue along a particle’s path through a patient’s body. This causes damage to the other tissue and organs near the tumor. Even with careful planning of the treatment, some energy will be released in the healthy organs around the sick ones, damaging them.

A new frontier in cancer therapy with particle beams is hadron therapy, which makes use of protons or heavier ions (that is, nuclei of heavier elements, like carbon). Because of the way hadrons interact with matter, they release the most significant part of their energy only at the end of their travel within a particular target, and the length of that path in the target depends on the initial beam energy. Thus, by fine-tuning the energy of the protons, we can make them hit almost only the tumor tissue and kill only the cancerous cells, like a very precise “proton blade”.
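The link between beam energy and depth can be illustrated with a rough rule of thumb. The sketch below assumes the empirical Bragg-Kleeman relation, range = α · Eᵖ, with approximate, commonly quoted fit values for protons in water (α ≈ 0.0022 cm and p ≈ 1.77, with the energy in MeV). These parameter values and function names are assumptions brought in for illustration, not figures from the article, and they are far too crude for actual treatment planning.

```python
# Approximate Bragg-Kleeman fit parameters for protons in water (assumed values).
ALPHA_WATER_CM = 0.0022
EXPONENT_WATER = 1.77


def proton_range_cm(energy_mev):
    """Approximate depth (cm of water) at which a proton beam stops."""
    return ALPHA_WATER_CM * energy_mev**EXPONENT_WATER


def energy_for_depth_mev(depth_cm):
    """Approximate beam energy (MeV) needed to stop at a given depth."""
    return (depth_cm / ALPHA_WATER_CM) ** (1.0 / EXPONENT_WATER)


for energy in (70.0, 150.0, 230.0):
    print(f"{energy:5.0f} MeV -> stops after about {proton_range_cm(energy):4.1f} cm")

energy_for_10cm = energy_for_depth_mev(10.0)
print(f"a tumor 10 cm deep needs roughly {energy_for_10cm:.0f} MeV")
```

Inverting the relation, as in the last line, mirrors the fine-tuning described above: choosing the depth of the tumor fixes the energy the beam should have.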

Even though hadron therapy is still under active development, it is already used in some advanced medical centers around the world—like the Italian CNAO and the Swiss PSI in Europe; and the Northwestern Medicine Chicago Proton Center in the U.S.—to treat nasty tumors in children or in areas which are difficult to operate on surgically.

Research spinoffs

Unsurprisingly, particle colliders are complex structures that require the design and development of new technologies. Oftentimes, the materials and techniques developed for the experiments are created in collaboration with industry partners, which leads to further development and application for other fields.

Let’s look in depth at an example, the LHC at CERN. Particles in the beam of the accelerator need to be confined and bent using force exerted by magnets. Accelerator physicists designed the LHC to attain a high energy, therefore they needed powerful magnets. To keep the magnets reasonably sized and with realistic power consumption, new superconducting magnets had to be invented. For these to work properly, they need to be cooled to a temperature of -271.25 °C (-456.25 °F), a temperature close to “absolute zero” and colder than outer space! To do this, innovative cryogenics were developed. Moreover, to ensure that accelerated particles keep their energy without smashing into air molecules, new vacuum pumping techniques were developed to suck out most of the air from the accelerator pipe. In other words, the beams of particles travel in an ultra-high vacuum, once again, in a place as empty as outer space!
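The temperature quoted above can be converted between scales with two one-line formulas. This small sketch, with illustrative function names, shows the Fahrenheit value and how close the magnets sit to absolute zero on the kelvin scale.

```python
def celsius_to_fahrenheit(celsius):
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


def celsius_to_kelvin(celsius):
    """Convert degrees Celsius to kelvin (absolute zero is 0 K)."""
    return celsius + 273.15


MAGNET_TEMPERATURE_C = -271.25  # from the text

magnet_fahrenheit = celsius_to_fahrenheit(MAGNET_TEMPERATURE_C)
magnet_kelvin = celsius_to_kelvin(MAGNET_TEMPERATURE_C)

print(f"{MAGNET_TEMPERATURE_C} °C = {magnet_fahrenheit:.2f} °F = {magnet_kelvin:.2f} K")
```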

Source: CERN.

Scientific findings are not always immediately used. But every bit of knowledge might be used eventually. Like building blocks in a LEGO set, every piece of knowledge and research contributes to the foundation for what will come after it. For example, Marie Curie didn’t know what future technologies would benefit from her research on radioactivity, but all of today’s medical imaging techniques stem from her and her colleagues’ research. Neither did Einstein develop his theory of General Relativity expecting it to be used for the calibration of GPS devices. Fundamental research not only advances human knowledge, it enables the rise of new and advanced technologies.

Happy 30th birthday, World Wide Web!

Building particle accelerators is a large collaborative undertaking. Years ago, particle physicists and engineers were having trouble efficiently sharing data, distributing information, and collaborating as computers became more mainstream. To solve that, they invented the Web.

Whether you’re commuting home or to work, or sipping a drink at your favorite coffee shop, you’re reading this article on a screen. But these words are not stored on your device. When you open this article on your phone, tablet, or computer, in an instant a piece of software called the browser connects over the Internet to another machine sitting in a room somewhere around the world, called the server, and asks to download the article. The article is then passed from the server to your device, the client, and displayed on the screen to read. Clicking another link in this article will perform this process again, accessing other websites stored on other servers. This remarkably common yet amazing process is only possible due to a collection of technologies called the World Wide Web, or the WWW.
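The exchange between client and server described above can be reproduced in miniature on a single computer. The sketch below uses only Python’s standard library: it starts a tiny web server on the local machine, then acts as the client and downloads a page from it. The page content, the `/article` path, and the helper names are made up for illustration; a real browser does much more, such as rendering the page and following links.

```python
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# The "article" stored on the server (made-up content for this example).
ARTICLE = b"<html><body><h1>Particle Physics</h1></body></html>"


class ArticleHandler(BaseHTTPRequestHandler):
    """Server side: answer every GET request with the stored article."""

    def do_GET(self):
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(ARTICLE)))
        self.end_headers()
        self.wfile.write(ARTICLE)

    def log_message(self, format, *args):
        pass  # keep the example output quiet


def fetch_article_once():
    """Start a local server, request the article as a client, then shut down."""
    server = HTTPServer(("127.0.0.1", 0), ArticleHandler)  # port 0: pick a free port
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        # Client side: open a connection and ask for the page, bypassing any proxy.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(f"http://127.0.0.1:{port}/article", timeout=5) as response:
            return response.status, response.read()
    finally:
        server.shutdown()
        server.server_close()


status, body = fetch_article_once()
print(status, body.decode("utf-8"))
```

On the real Web the server sits on another machine, but the request and the response follow the same pattern.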

CERN’s LHC experiments are only made possible through the contribution of many countries and institutes.

The ATLAS experiment has around 3,000 scientific authors from 183 institutions around the world, representing 38 countries. Around 1,200 doctoral students are involved in the research, as well as many engineers, technicians, and administrative staff. The CMS experiment is similar.

These experiments are among the largest collaborative efforts ever attempted in science!

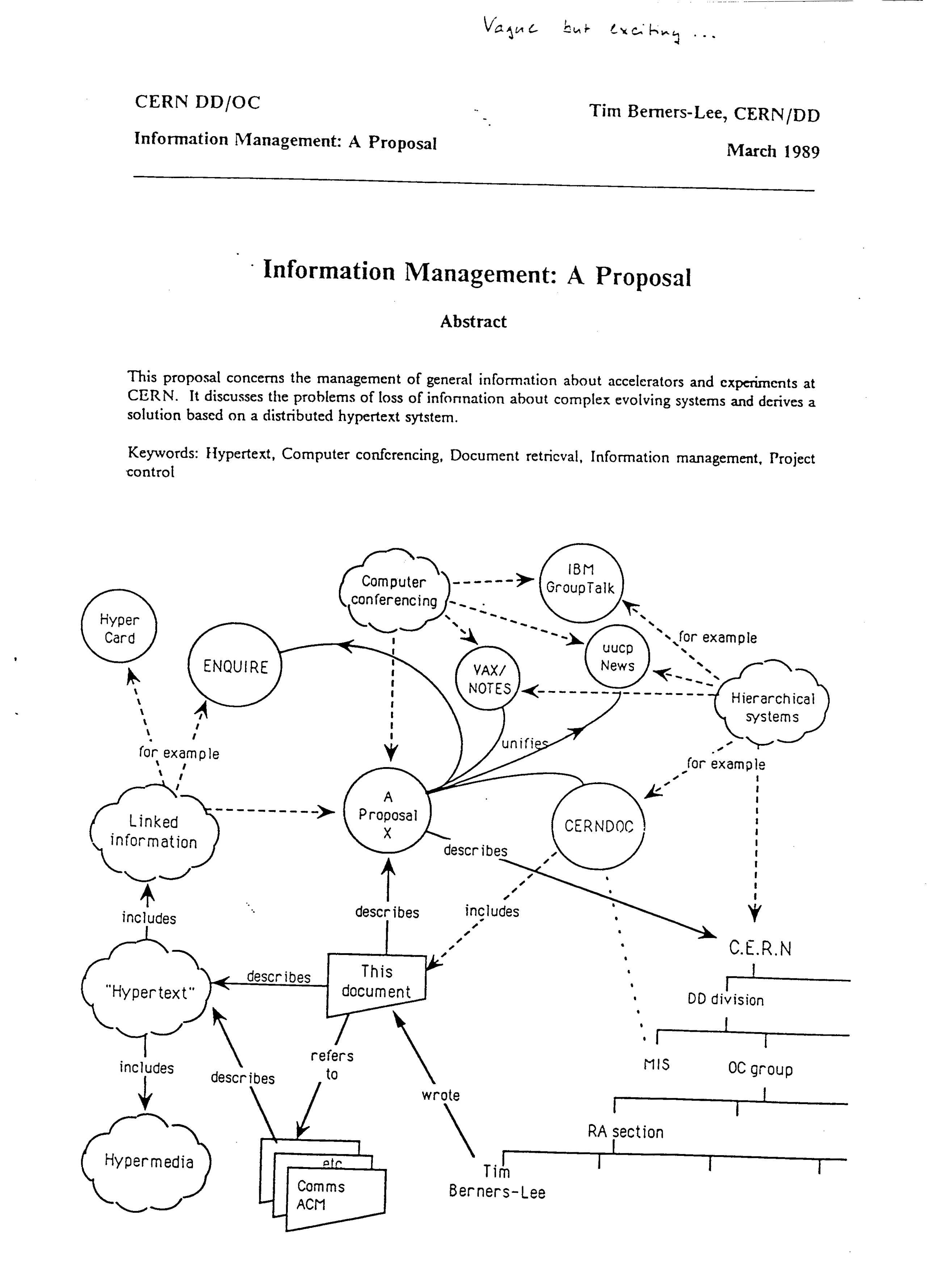

Before the Web could handle bank account balances, images, food orders, and all the other data we consume in our daily digital life, this mechanism was invented to handle particle physics data. At the end of the 1980s, a computer scientist at CERN, Tim Berners-Lee, was trying to find a solution to easily share information among the various institutes participating in the experiments.

30 years ago, in March 1989, Tim Berners-Lee presented to his supervisor, Mike Sendall, a proposal to develop a new “information management system,” designed to share information about the activities within CERN. At the same time, a CERN manager, Robert Cailliau, was looking for solutions to let the future LHC experiments efficiently share information.

Sendall passed Berners-Lee’s proposal to Cailliau, after having added a personal note on the cover page: “Vague but exciting…” Cailliau recognized that Berners-Lee’s idea could be a potential solution to distribute documents and data across institutes for future LHC experiments, and supported its development.

Source: CERN.

And thus, the WWW was born. The core software was released in the public domain, allowing the software to be freely re-used and adapted without any restriction. This decision was driven by Tim Berners-Lee’s vision for the WWW, and ultimately was its strength. During the following years, the WWW circulated throughout academic communities and slowly drew the attention of industry. After becoming standardized, it evolved to what we know today: the ubiquitous “www”, the backbone of our digital life.

A last word

Particle physics helps us unveil the mysteries of the universe, and has let us discern the basic components of matter and the basic forces which govern the universe as we know it so far. As in other fundamental sciences, practical applications aren’t its focus. Still, the discoveries made by particle physicists, and the technologies developed to enable their experiments, have transformed our lives forever.